JavaScript SEO and Rendering: How Search Engines See Your Modern App

A modern web app makes a quiet bet. The HTML that ships from the server is small, almost empty. The actual page is built in the browser, by JavaScript that runs after the page loads. For users on a fast laptop with a good network, this works beautifully. For search engines, it sometimes does not work at all.

This guide walks through how Google actually renders JavaScript today, the two wave model that explains why some pages take longer to be indexed, the four common rendering strategies and when each one fits, the patterns that quietly break indexing on JavaScript heavy sites, what AI crawlers do and do not execute, how to test what Google really sees, and a decision framework that turns rendering choices from religion into engineering.

Rendering in 2026, Briefly

When a page is requested, something has to turn code and data into HTML. That something can sit on a server, run during a build, or run in the user’s browser. The choice shapes performance, complexity, and search visibility.

Five years ago this was a debate between two camps. Server side rendering versus client side rendering, with strong opinions on both ends. The conversation has matured. The honest answer in 2026 is that the question itself is wrong. Different parts of the same site need different strategies. A marketing landing page is not a logged in dashboard. A product detail page is not a search results screen. The right rendering choice depends on what the page does, who reads it, and how often the underlying data changes.

For SEO specifically, three constraints shape the answer. Search engines need to find the content. AI engines need to read the content. Both need to do this within a budget of patience that is shorter than a human visitor’s. A page that looks complete to a human after three seconds may still be invisible to a crawler that gave up at one second.

The Two Wave Indexing Model

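Indexing of JavaScript heavy pages is commonly described as a two wave process. Google introduced the framing itself, and it still works as a mental model, even though Google’s current documentation describes crawling, rendering, and indexing as separate phases.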

In the first wave, Googlebot fetches the raw HTML and indexes whatever it finds there. If the HTML is mostly empty (a <div id="root"> with a script tag and not much else), the first wave indexes very little.

In the second wave, the page joins a render queue. The Web Rendering Service runs the JavaScript, renders the page like a real browser would, and produces the final HTML. Then that final HTML is indexed. Only at this point does the JavaScript built content become visible to search.

The gap between the two waves used to be measured in days or weeks. By 2026, Google has compressed this for most domains down to minutes or hours, but the model still exists. For low priority pages on smaller sites, the second wave can still trail.

What this means in practice is that JavaScript content is not invisible to Google, but it is delayed and budgeted. Pages with valuable content available in the first wave HTML get indexed faster and ranked sooner. Pages where everything depends on the second wave are at a disadvantage, particularly for time sensitive content like news, pricing, and inventory.
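
To make the first wave concrete, here is a minimal sketch of what a non rendering fetcher can read from raw HTML. It is an approximation built on regular expressions, not Google's algorithm, and the names firstWaveText, wordCount, csrShell, and ssrPage are hypothetical.

```typescript
// Approximates the text a non-rendering fetcher can read from raw HTML.
// Assumption: stripping tags with regular expressions is good enough for a
// quick comparison; a real crawler uses a proper HTML parser.
function firstWaveText(rawHtml: string): string {
  return rawHtml
    .replace(/<script\b[\s\S]*?<\/script>/gi, " ") // script bodies are not content
    .replace(/<style\b[\s\S]*?<\/style>/gi, " ") // neither are stylesheets
    .replace(/<[^>]+>/g, " ") // drop every remaining tag
    .replace(/\s+/g, " ")
    .trim();
}

function wordCount(text: string): number {
  return text === "" ? 0 : text.split(" ").length;
}

// A typical client side rendered shell: an empty root element plus a bundle.
const csrShell = `<!doctype html><html><head><title>Shop</title></head>
<body><div id="root"></div><script src="/bundle.js"></script></body></html>`;

// The same page when the server has already rendered the content.
const ssrPage = `<!doctype html><html><head><title>Blue Kettle | Shop</title></head>
<body><div id="root"><h1>Blue Kettle</h1><p>A 1.7 litre kettle with auto shut-off.</p></div>
<script src="/bundle.js"></script></body></html>`;

console.log(firstWaveText(csrShell)); // "Shop": only the <title> text survives
console.log(wordCount(firstWaveText(ssrPage)) > wordCount(firstWaveText(csrShell))); // true
```

Running the same function over the raw response of a production page gives a quick read on how much of its content depends on the second wave.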

The same logic applies to other search engines and to crawl based AI systems, with one important difference covered later: AI crawlers run JavaScript far less reliably than Googlebot does.

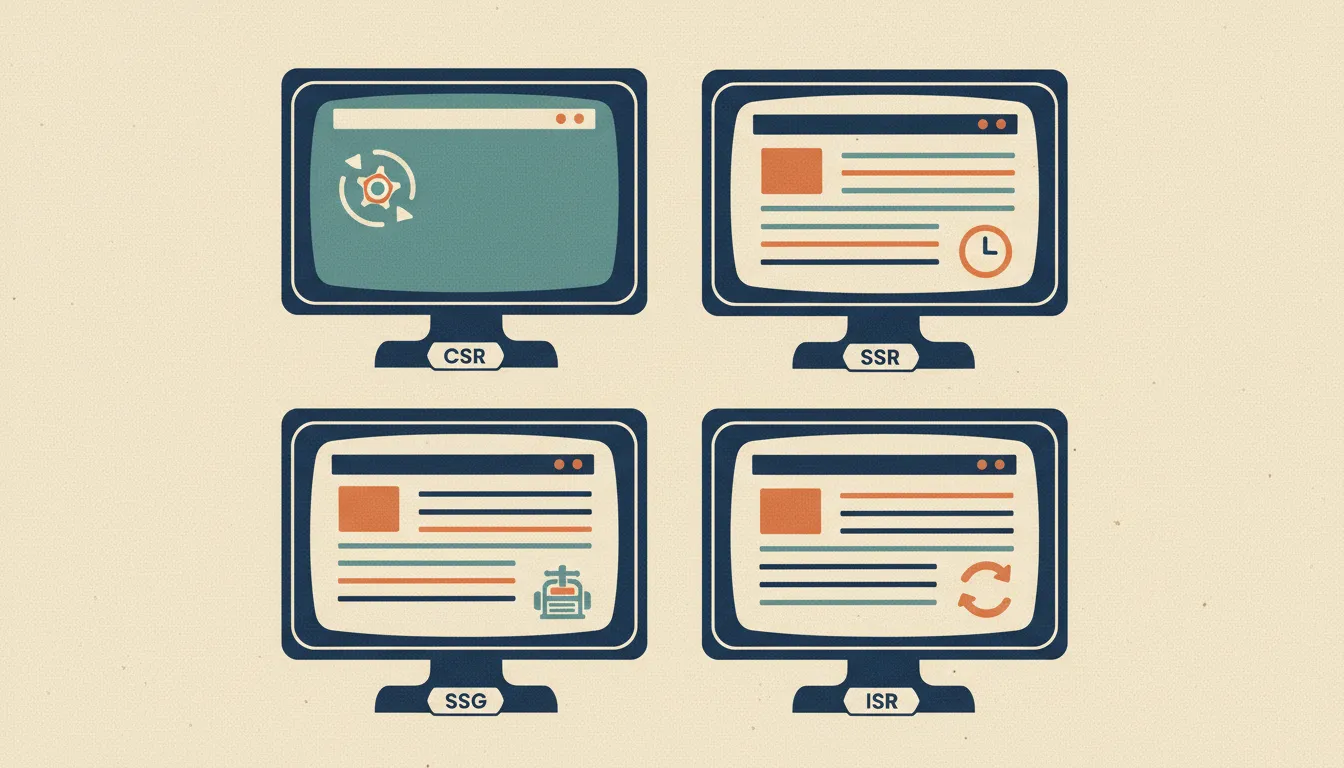

Four Rendering Strategies

Modern frameworks expose four common strategies. They are not mutually exclusive. A real site usually uses two or three for different parts of the same app.

Client Side Rendering (CSR). The server sends a minimal HTML shell. The browser downloads JavaScript, fetches data, and assembles the page. Example: a classic React or Vue single page application with no meta framework on top.

For SEO, CSR is the riskiest option. Content depends entirely on JavaScript execution, which requires the second indexing wave for Google and is unreliable for AI crawlers and smaller search engines. Use CSR for pages that do not need search visibility, like a logged in app dashboard, a settings panel, or a tool interface behind authentication.

Server Side Rendering (SSR). On every request, the server runs the application code and returns fully rendered HTML. The browser also receives the JavaScript bundle so the page becomes interactive after hydration.

For SEO, SSR is the most reliable option. The first wave already has the full content. Search engines and AI crawlers see what they need without executing JavaScript. The cost is server load: every request is a render, and infrastructure has to scale with traffic. Use SSR for pages where content changes per request and freshness matters, like personalized feeds, real time pricing, search results pages, and content gated by request parameters.
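
As an illustration of the SSR idea, the sketch below renders a complete page on the server from request data, with no framework involved. The Product shape, the renderProductPage function, and the /bundle.js path are assumptions made for the example; a real app would let its framework do this work.

```typescript
interface Product {
  name: string;
  description: string;
  priceCents: number;
}

// Escape text before placing it in HTML so product data cannot inject markup.
function escapeHtml(value: string): string {
  return value
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Hypothetical per-request renderer. The response already contains the
// content; the script tag only adds interactivity afterwards (hydration).
function renderProductPage(product: Product): string {
  const price = (product.priceCents / 100).toFixed(2);
  return [
    "<!doctype html>",
    "<html><head>",
    `<title>${escapeHtml(product.name)}</title>`,
    "</head><body>",
    `<div id="root"><h1>${escapeHtml(product.name)}</h1>`,
    `<p>${escapeHtml(product.description)}</p>`,
    `<p class="price">${price}</p></div>`,
    '<script src="/bundle.js"></script>',
    "</body></html>",
  ].join("\n");
}

const kettleHtml = renderProductPage({
  name: "Blue Kettle",
  description: "Boils fast & quietly.",
  priceCents: 3499,
});
console.log(kettleHtml);
```

Everything a crawler needs is in the string the server returns, so nothing depends on the bundle executing.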

Static Site Generation (SSG). At build time, every page is rendered to HTML once and saved. The deployed site is a folder of static HTML files served by a CDN. Examples: Astro, Next.js with output: "export", Hugo, Jekyll.

For SEO, SSG is the fastest and most reliable option. The HTML is already there before any user arrives, so first wave indexing captures the full content immediately. The cost is build time growth: a 50,000 page site can take an hour to build, which works out to roughly 72 milliseconds per page. Use SSG for pages where content changes rarely between deployments, like blog posts, documentation, marketing pages, and product catalogs that rebuild on a schedule.

Incremental Static Regeneration (ISR). A hybrid. Pages are served as static HTML, but each page has a revalidation interval. After the interval expires, the next request still receives the stale page and triggers a background regeneration, and subsequent requests get the new version. Examples: Next.js ISR, Astro server endpoints with cache headers.

For SEO, ISR matches SSG’s first wave reliability while allowing content to update without a full rebuild. The cost is operational complexity: cache invalidation strategies and stale content windows. Use ISR for sites that have too many pages for a feasible full rebuild and whose content must stay fresher than a marketing site’s, like e-commerce catalogs with thousands of products that update prices a few times a day.
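
The revalidation behavior can be sketched as a small stale while revalidate cache. This is a simplified, synchronous model written for illustration, not the implementation of any framework; createRevalidatingCache and its parameters are hypothetical, and the clock is injected so the behavior is easy to follow.

```typescript
interface CacheEntry {
  html: string;
  generatedAt: number; // milliseconds on the injected clock
}

// Simplified model of ISR. A real framework regenerates in the background;
// here regeneration happens inline, but the caller still gets the stale HTML.
function createRevalidatingCache(
  render: (path: string) => string,
  revalidateMs: number,
  now: () => number,
): (path: string) => string {
  const entries = new Map<string, CacheEntry>();

  function store(path: string): CacheEntry {
    const entry: CacheEntry = { html: render(path), generatedAt: now() };
    entries.set(path, entry);
    return entry;
  }

  return function get(path: string): string {
    const cached = entries.get(path);
    if (cached === undefined) {
      // First request for this path: render once and keep the result.
      return store(path).html;
    }
    if (now() - cached.generatedAt >= revalidateMs) {
      // Interval expired: refresh the entry for the NEXT request...
      store(path);
    }
    // ...but answer this request with what was already cached.
    return cached.html;
  };
}

let clockMs = 0;
let currentPrice = 10;
const getPage = createRevalidatingCache(
  (path) => `<p>${path} costs ${currentPrice}</p>`,
  60000,
  () => clockMs,
);

const firstHit = getPage("/kettle"); // rendered with price 10
currentPrice = 12;
clockMs = 30000;
const withinInterval = getPage("/kettle"); // still price 10, entry is fresh
clockMs = 61000;
const staleHit = getPage("/kettle"); // still price 10, regeneration triggered
const afterRegeneration = getPage("/kettle"); // now price 12
console.log(firstHit, withinInterval, staleHit, afterRegeneration);
```

The stale content window is visible in the third request: the price changed long before, yet the old HTML is served one more time.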

A summary table:

| Strategy | First wave SEO | Build time | Server load | Best for |
|---|---|---|---|---|
| CSR | Risky | None | None | Logged in apps |
| SSR | Reliable | None | High | Personalized, real time |
| SSG | Reliable | High | None | Stable content at scale |
| ISR | Reliable | Medium | Medium | Frequently updated catalogs |

Patterns That Quietly Break Indexing

The strategies above are clean in theory. In production, specific patterns break indexing even on sites that intended to do the right thing.

Content rendered after a user interaction. A page loads with a teaser, and the full article appears only after the user clicks “Read more.” Googlebot does not click. Whatever is hidden behind the click is invisible to search. The fix is to render the full content in the initial HTML and use CSS or JavaScript to collapse it visually for users.
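
One way to apply this fix is to ship the whole article in the initial HTML and collapse it with the native details element, so no click is needed for the text to exist in the DOM. The helper below is a hypothetical sketch; it assumes both arguments are already safe HTML, and the class name is made up.

```typescript
// Hypothetical helper: the full body is part of the server response and is
// only collapsed visually, instead of being fetched when the user clicks.
// Assumption: teaserHtml and fullBodyHtml are already escaped or sanitized.
function renderCollapsibleArticle(teaserHtml: string, fullBodyHtml: string): string {
  return [
    `<p>${teaserHtml}</p>`,
    "<details>",
    "<summary>Read more</summary>",
    `<div class="article-body">${fullBodyHtml}</div>`,
    "</details>",
  ].join("\n");
}

const articleHtml = renderCollapsibleArticle(
  "Kettles compared.",
  "<p>The full comparison of all six kettles goes here.</p>",
);
console.log(articleHtml);
```

A CSS based collapse works the same way as long as the body text is in the markup the server returns.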

Lazy loading triggered by scroll. A long page renders the first viewport, and additional sections appear as the user scrolls. Googlebot does not scroll like a user. Instead, it renders the page in a very tall viewport (commonly reported at around 12,000 pixels) and indexes what is visible. Content that requires real scrolling beyond that height is at risk. The fix is to use the loading="lazy" attribute on images and iframes (which Google understands) instead of JavaScript triggered scroll loading for content.

Routes that depend on browser only APIs. A route’s content fetches data using localStorage, sessionStorage, IndexedDB, or session cookies. The Web Rendering Service does not preserve state between renders, so any content that depends on browser stored data appears empty. The fix is to make sure the canonical SEO content is fetched from the server at render time, not from the browser’s storage.

Content blocked by cookie banners or paywalls. A consent banner overlays the page until the user accepts. If the rendered DOM still has the content underneath the banner, fine. If the content is removed from the DOM until consent is granted, Google sees only the banner. The fix is to make sure SEO content is in the DOM before consent, even if it is visually hidden, or use a soft consent gate that does not remove content.

Critical content in <noscript> tags. Some teams, knowing JavaScript rendering can fail, mirror their content inside <noscript> blocks. This used to work. Heavy use of <noscript> content is now widely treated as risky, because abuse patterns made it resemble cloaking. Use <noscript> for fallback notes (like a “JavaScript is required” message), not for indexable content.

JavaScript redirects. A page returns 200 OK with a <script>window.location = "/new-url"</script>. The first wave sees an empty page. The second wave eventually catches the redirect, but the original URL was indexed empty in between. The fix is HTTP 301 or 302 redirects on the server, not JavaScript navigation.
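
A server side redirect can be as small as a lookup that runs before any page is rendered. The sketch below is framework agnostic and hypothetical: the permanentRedirects map, the resolveRedirect function, and the RedirectResponse shape are assumptions, and wiring the result into a real HTTP response is left to the server in use.

```typescript
interface RedirectResponse {
  status: 301 | 302;
  location: string;
}

// Hypothetical redirect table, checked before any rendering happens.
const permanentRedirects = new Map<string, string>([
  ["/old-url", "/new-url"],
]);

// Returns the redirect to send, or null when the path should render normally.
function resolveRedirect(path: string): RedirectResponse | null {
  const target = permanentRedirects.get(path);
  if (target === undefined) {
    return null;
  }
  return { status: 301, location: target };
}

console.log(resolveRedirect("/old-url")); // { status: 301, location: "/new-url" }
console.log(resolveRedirect("/pricing")); // null
```

Because the status code and Location header arrive with the first response, the old URL never serves an empty 200 page.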

Dynamic meta tags injected after load. A React app sets document.title and updates meta tags after the route component mounts. Googlebot sees the original <title> from the static HTML, which is usually a generic site name. The page indexes with the wrong title and description. The fix is to use a head management library that injects tags during SSR, like next/head, react-helmet-async, or framework specific solutions.
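
Whatever library is used, the goal is that the head tags are part of the server response. A minimal sketch of that output, with a hypothetical PageMeta shape and renderHead function standing in for what a head management library produces during SSR:

```typescript
interface PageMeta {
  title: string;
  description: string;
  canonicalUrl: string;
}

// Escape values so titles and descriptions cannot break out of their tags.
function escapeHeadValue(value: string): string {
  return value
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Hypothetical server side head renderer: route specific tags are written
// into the HTML string itself, not patched in after the component mounts.
function renderHead(meta: PageMeta): string {
  return [
    "<head>",
    `<title>${escapeHeadValue(meta.title)}</title>`,
    `<meta name="description" content="${escapeHeadValue(meta.description)}">`,
    `<link rel="canonical" href="${escapeHeadValue(meta.canonicalUrl)}">`,
    "</head>",
  ].join("\n");
}

const kettleHead = renderHead({
  title: "Blue Kettle | Shop",
  description: 'A 1.7 litre kettle, the "quiet" one.',
  canonicalUrl: "https://example.com/products/blue-kettle",
});
console.log(kettleHead);
```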

Hash based routing. URLs like /products#electronics instead of /products/electronics. Google indexes the path, ignores the fragment, so all hash routes collapse into one indexed URL. The fix is HTML5 history API routing with real paths.
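
The collapse is easy to demonstrate. The sketch below drops the fragment from each URL, which mirrors how fragments are left out of HTTP requests, and then counts the distinct URLs that remain. The function and variable names are hypothetical.

```typescript
// The fragment (everything from "#" onward) is not sent to the server, so a
// crawler that keys pages by requested URL sees hash routes as one document.
function urlWithoutFragment(url: string): string {
  const hashIndex = url.indexOf("#");
  return hashIndex === -1 ? url : url.slice(0, hashIndex);
}

const hashRoutes = ["/products#electronics", "/products#garden"];
const pathRoutes = ["/products/electronics", "/products/garden"];

const distinctHashPages = new Set(hashRoutes.map(urlWithoutFragment)).size;
const distinctPathPages = new Set(pathRoutes.map(urlWithoutFragment)).size;

console.log(distinctHashPages); // 1
console.log(distinctPathPages); // 2
```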

A reliable test: view the rendered HTML in Search Console’s URL Inspection tool or in the Rich Results Test (the Mobile Friendly Test that older guides recommend was retired in December 2023) and search for the content you expect. If a sentence from your hero copy is missing from the rendered HTML, that is a problem worth fixing before traffic does it for you. The same kind of audit benefits from a technical SEO audit checklist run periodically to catch regressions early.

AI Crawlers and JavaScript

The crawler landscape in 2026 is no longer just Googlebot. AI training and inference systems run their own crawlers, and they behave differently.

ChatGPT, Claude, Perplexity, and Gemini citations generally rely on crawlers that fetch HTML and do limited or no JavaScript execution. When an AI engine cites a page, it is almost always citing what it found in the first wave HTML. A page that requires JavaScript to render the article body is invisible to most AI training crawlers and partially visible to inference time crawlers (the ones used for live citations).

Bingbot runs a JavaScript renderer based on Microsoft Edge, similar in capability to Googlebot. Bing indexes JavaScript content, but with the same two wave delay logic.

Smaller search engines and SEO tools vary widely. Many do not run JavaScript at all and only see the raw HTML.

The takeaway is uncomfortable for SPA only sites. Even if Google now indexes JavaScript reliably, the broader ecosystem of AI engines and smaller search systems does not. A page that depends on JavaScript for its content may rank in Google yet is unlikely to be cited by ChatGPT, to appear in Bing AI summaries, or to show up in Perplexity sources.

For sites where AI traffic and citation matter (which by 2026 is most sites with a content strategy), the practical answer is to move toward SSR or SSG for indexable pages. The principle: anything you want a machine to read should be in the first wave HTML.

This connects directly to generative engine optimization, which depends on AI crawlers being able to read content. JavaScript rendered content that Google can see is still invisible to most AI engines, which means optimizing for AI requires solving rendering.

Testing What Search Engines Actually See

Several tools reveal what Google and other crawlers see when they fetch a page. Use them before assuming a page is indexed correctly.

Google Search Console URL Inspection. The single most important tool. For any URL on a verified property, run “Test live URL” and look at the rendered HTML, the screenshot, and the JavaScript console messages Google recorded. If the rendered HTML is missing your main content, the page will index empty. If the screenshot shows a blank page, the renderer failed.

Mobile Friendly Test (search.google.com/test/mobile-friendly). Google retired this public tool in December 2023, so guides that still recommend it are out of date. For pages on sites you do not own, and for quick spot checks, use the Rich Results Test, which is public and needs no verification.

Rich Results Test (search.google.com/test/rich-results). Designed for structured data, but also exposes the rendered HTML. Useful as a second opinion when URL Inspection results look ambiguous.

curl with no JavaScript. Run curl -A "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)" https://yoursite.com/page and inspect the response. This shows what AI crawlers and any JavaScript free fetcher will see. If your hero content is missing, AI engines cannot read it.

Browser DevTools with JavaScript disabled. In Chrome DevTools, open Settings, go to Preferences, and under Debugger check “Disable JavaScript” (or run “Disable JavaScript” from the Command Menu), then reload the page. This is the closest thing to seeing what a non rendering crawler experiences. If the page is blank, you have a problem for any non Google crawler.

A real SEO crawler that supports JavaScript rendering. Tools like Seodisias crawl your site like Googlebot does, render the JavaScript, and report what content was extracted. This is essential for sites with hundreds or thousands of pages where manual spot checks are not feasible.

The pattern: do not trust that JavaScript content “probably indexes.” Verify, on a sample of pages from each template, every release.
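
A per template check like this is simple to automate once the raw HTML of each sample page has been saved, for example from the curl command above. The sketch below is hypothetical throughout (TemplateSample, AuditFinding, auditSamples, and the sample data are all made up) and only does plain substring matching.

```typescript
interface TemplateSample {
  template: string; // e.g. "product", "blog-post"
  rawHtml: string; // response body fetched WITHOUT executing JavaScript
  expectedPhrases: string[]; // copy that must be readable by any crawler
}

interface AuditFinding {
  template: string;
  missing: string[];
}

// Reports, per template, which expected phrases are absent from the raw HTML.
// Templates with nothing missing are left out of the result.
function auditSamples(samples: TemplateSample[]): AuditFinding[] {
  return samples
    .map((sample) => ({
      template: sample.template,
      missing: sample.expectedPhrases.filter(
        (phrase) => !sample.rawHtml.includes(phrase),
      ),
    }))
    .filter((finding) => finding.missing.length > 0);
}

const findings = auditSamples([
  {
    template: "product",
    rawHtml: "<h1>Blue Kettle</h1><p>A 1.7 litre kettle.</p>",
    expectedPhrases: ["Blue Kettle", "1.7 litre"],
  },
  {
    template: "category",
    rawHtml: '<div id="root"></div><script src="/bundle.js"></script>',
    expectedPhrases: ["All kettles"],
  },
]);
console.log(findings); // only the "category" template is reported
```

Wired into a release pipeline, a non-empty findings list is a reasonable condition for failing the build.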

A Decision Framework

Choosing a rendering strategy becomes simpler when framed around three questions.

Question one, does this page need to rank in search?

If no (a logged in dashboard, an admin panel, an internal tool), CSR is fine and saves complexity. Skip to focusing on user experience and bundle size.

If yes, continue.

Question two, how often does the underlying content change?

If rarely (changes per deployment, or a few times a day on a small site), SSG is the cleanest answer. Build the pages, deploy them, and let the CDN do the work.

If frequently but predictably (catalogs that update prices hourly, news that publishes daily), ISR matches the cadence. Set revalidation to the smallest interval where stale content is acceptable.

If per request (personalized feeds, real time pricing, search results), SSR is required.

Question three, can the page tolerate the risk of partial indexing for AI engines?

If the page is for Google only and you accept losing AI citations, CSR with content rendering after load might be acceptable.

If the page is part of a content strategy that includes AI engine visibility, the answer must include SSR or SSG. AI crawlers do not run JavaScript reliably, and that is a hard constraint, not a preference.

A summary table:

| Need to rank? | Change frequency | AI visibility needed? | Strategy |
|---|---|---|---|
| No | Any | No | CSR |
| Yes | Per request | Yes | SSR |
| Yes | Predictable, frequent | Yes | ISR |
| Yes | Rare | Yes | SSG |
| Yes | Mixed | Yes | SSR for hot pages, SSG for stable, ISR for catalogs |

A modern site usually ends up with the last row. Marketing pages and blog posts as SSG. Product catalogs as ISR. Search and personalized pages as SSR. Logged in app shell as CSR. Each part of the site uses what fits, and the SEO surface stays in the first wave HTML.
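
The single strategy rows of the table can be written down as a small function applied per page type. This is a sketch of the framework as described here, with hypothetical names (PageProfile, chooseStrategy); it leaves out the Google only exception from question three, where CSR might be acceptable for a page that needs to rank.

```typescript
type ChangeFrequency = "rare" | "frequent" | "per-request";
type Strategy = "CSR" | "SSR" | "SSG" | "ISR";

interface PageProfile {
  needsToRank: boolean;
  changeFrequency: ChangeFrequency;
}

// Question one decides whether rendering matters for search at all;
// question two picks between the three first wave friendly strategies.
function chooseStrategy(page: PageProfile): Strategy {
  if (!page.needsToRank) {
    return "CSR";
  }
  if (page.changeFrequency === "per-request") {
    return "SSR";
  }
  if (page.changeFrequency === "frequent") {
    return "ISR";
  }
  return "SSG";
}

// The "mixed" row is simply this function applied to each part of the site.
const siteSections: Record<string, PageProfile> = {
  blog: { needsToRank: true, changeFrequency: "rare" },
  catalog: { needsToRank: true, changeFrequency: "frequent" },
  search: { needsToRank: true, changeFrequency: "per-request" },
  dashboard: { needsToRank: false, changeFrequency: "per-request" },
};

for (const [section, profile] of Object.entries(siteSections)) {
  console.log(section, chooseStrategy(profile));
}
```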

This decision shape connects with crawl budget optimization, because a site that mixes rendering strategies thoughtfully also burns less crawl budget on slow JavaScript renders. Pages that load complete in the first wave save Googlebot a render trip, which means more pages can be crawled in the same budget.

What to Build Next

The rendering choice is not the last technical SEO decision a site makes, but it is one of the earliest, and it shapes most of the rest. Internal links between SSG pages are cheap. Internal links into a CSR app shell rarely register. Schema markup on an SSR page is read by every crawler. Schema markup injected by JavaScript on a CSR page is read by Google and few others.

If a site you maintain still leans on CSR for indexable pages, treat the migration to SSR or SSG as a foundation move. The visibility gains compound: every other technical SEO improvement (canonical tags, internal linking, schema, hreflang) lands on stronger ground when the content is in the first wave HTML.

For a deeper look at what crawlers actually do once they reach a page, the SEO crawler complete guide explains how crawlers traverse, render, and report on JavaScript heavy sites. For an audit framework that includes rendering checks, the technical SEO audit checklist has a JavaScript section worth running every quarter. And for a tool that crawls with a real browser engine and reports what content was extracted, Seodisias is built to surface the gap between what your code produces and what a search or AI crawler actually sees.

The site that wins the next era of search is not the site with the cleanest architecture. It is the site whose content shows up in the first wave HTML, every time, for every crawler that matters.