Internal Linking as a Crawl Budget Tool: Patterns, Anchors, Audits

Internal links are the wiring of a website. Every link from one page to another tells a search engine where to go next, how often to come back, and which pages matter most. Get the wiring right and the crawler reaches what you want indexed, in the order that reflects priority. Get it wrong and the most important pages stay buried under five clicks of navigation while thin pages take the front row.

Most internal linking advice frames the topic around ranking signals or anchor text alone. Both matter, but the deeper job of internal links is structural. They are the primary lever for how a crawler spends its budget on your site. This guide walks through five patterns that solve real problems, the anchor text rules that hold up at scale, the topic cluster shape that aligns with how AI search reads sites, the orphan and link depth issues that quietly degrade visibility, and the audit routine that keeps it all in shape.

Internal Links as a Crawl Budget Tool

A crawler arrives at your site with a finite budget. Googlebot, Bingbot, GPTBot, and ClaudeBot each limit how many requests they will make to a site over a given period. Where they spend that budget is shaped by your sitemap, your robots.txt, and most of all by the links the crawler finds inside your pages.

A page with many incoming internal links signals importance, and the crawler revisits it more often. A page with one or two incoming links sits at the edge of the budget and is recrawled rarely. A page with zero incoming links is effectively invisible to link-following crawlers unless it appears in the sitemap, and even then it is treated as a low-priority discovery.

This means every internal link is also a budget decision. Linking from your homepage to a deep blog post pulls that post into a high-attention zone. Letting a category page accumulate fifty links to long-tail variants wastes budget on pages that probably should not exist. The logic behind technical crawl budget optimization applies directly to how you wire your internal links.

For large sites, internal linking is the single highest-leverage technical SEO control after a clean robots.txt and sitemap. For small sites, it is the difference between a clean architecture and a tangle that even you cannot navigate after a year of editorial drift.

Five Patterns That Solve Real Problems

The five patterns below cover almost every internal linking decision a content or commerce site has to make. They are not exhaustive; they are the ones with the highest return.

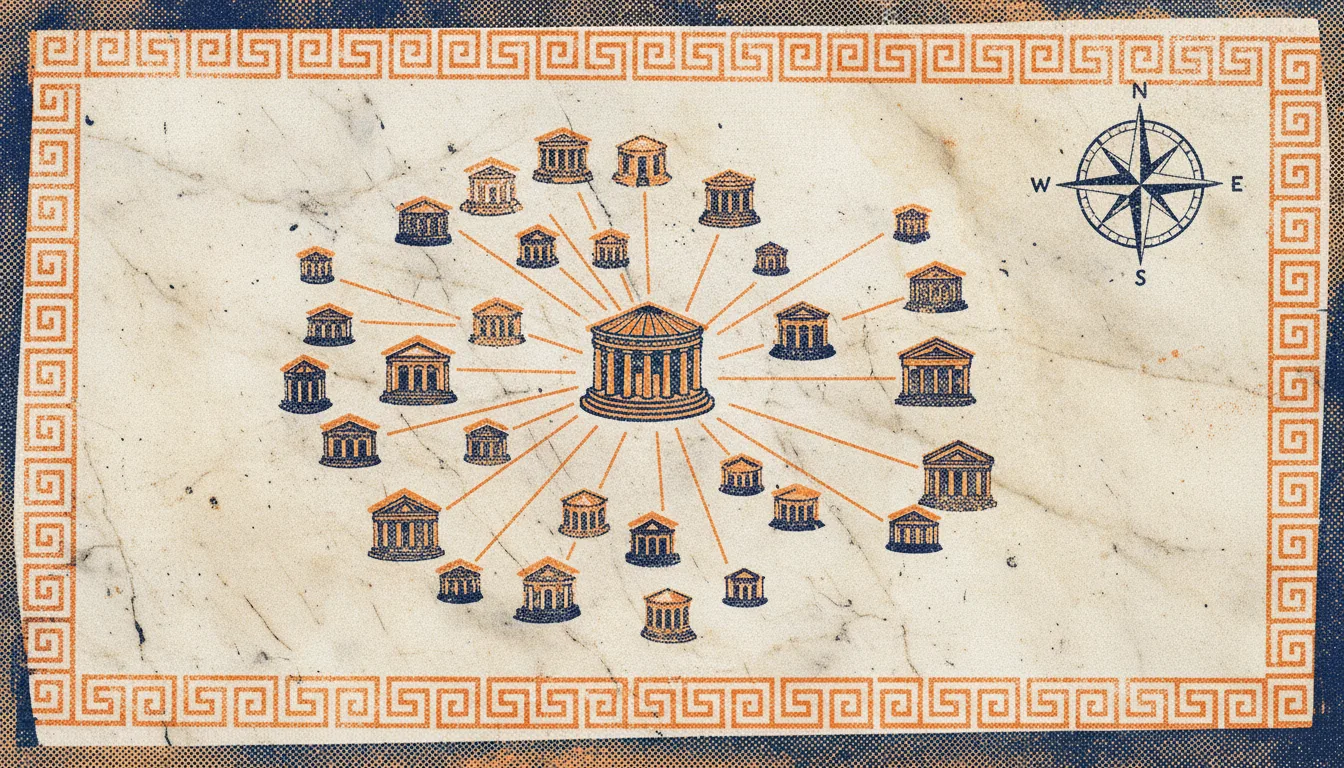

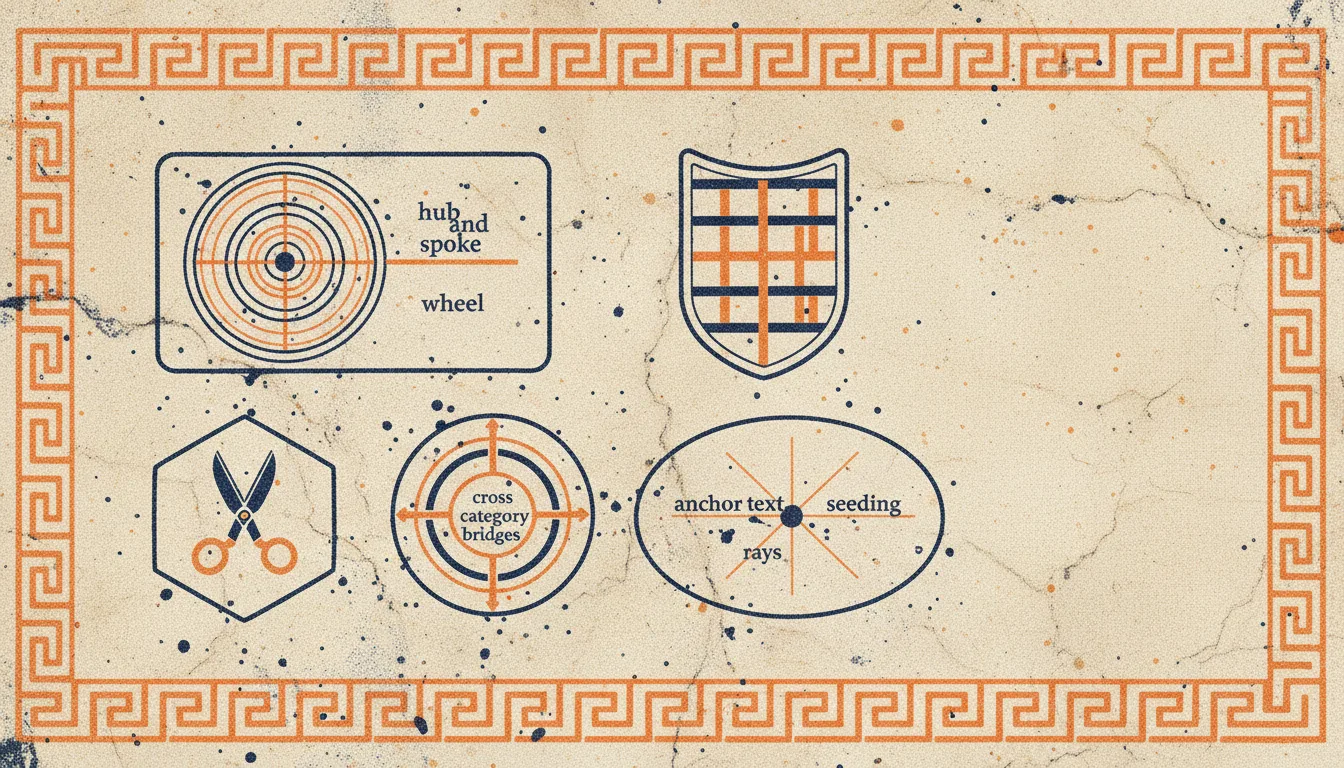

Hub and spoke. A pillar page covers a broad topic. Each subtopic gets its own page, linked from the pillar. The subtopic pages link back to the pillar. This is the simplest cluster pattern, easy to plan and easy to audit. Use it for any topic that has a clear center and several specific facets.
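Because hub and spoke is easy to audit, the check can be a few lines of code. The sketch below assumes your crawler can export internal links as (source, target) URL pairs; the cluster URLs are hypothetical. It reports spokes the pillar does not link to and spokes that do not link back.

```python
# Minimal sketch: verify the hub-and-spoke shape of one cluster.
# Assumption: internal links come from a crawl export as (source, target) pairs.

def check_hub_and_spoke(pillar, spokes, links):
    """Return (spokes the pillar misses, spokes without a link back to the pillar)."""
    link_set = set(links)
    missing_from_pillar = sorted(s for s in spokes if (pillar, s) not in link_set)
    missing_back_link = sorted(s for s in spokes if (s, pillar) not in link_set)
    return missing_from_pillar, missing_back_link


if __name__ == "__main__":
    # Hypothetical cluster around a crawl budget pillar page.
    links = [
        ("/crawl-budget/", "/crawl-budget/robots-txt/"),
        ("/crawl-budget/", "/crawl-budget/sitemaps/"),
        ("/crawl-budget/robots-txt/", "/crawl-budget/"),
    ]
    spokes = ["/crawl-budget/robots-txt/", "/crawl-budget/sitemaps/", "/crawl-budget/link-depth/"]
    no_link_out, no_link_back = check_hub_and_spoke("/crawl-budget/", spokes, links)
    print("Pillar does not link to:", no_link_out)
    print("Spokes without a link back:", no_link_back)
```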

Depth pruning. The deeper a page sits in your link graph, the less crawl attention it receives. If a critical conversion page is four clicks deep from the homepage, lift it. Add direct links from the homepage, the global footer, or a high traffic blog post. Link depth is something you measure and shrink, not something the CMS decides for you.

Cross category bridges. Sites with multiple categories often build silos that never connect. A page in category A never links to a related page in category B, even when readers naturally move between them. Add bridges. They distribute crawl attention beyond a single category and they reflect how readers actually behave.

Anchor text seeding. Every anchor is a signal. Use descriptive anchor text that matches the target page’s intent. If the target page covers crawl budget, link with the words “crawl budget”, not “click here”. This pattern is small per link, but at scale it adds up to a strong topical signal.

Pruning unproductive links. Not every internal link helps. Links to noindex pages, redirects, and 404s drain crawl budget without giving anything back. Excessive duplicate links from a single page to the same target add clutter without adding signal. Periodic pruning is part of the pattern set, not a separate maintenance task.
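A pruning pass can start from crawl data you already have. This sketch assumes a list of (source, target) link pairs, a dict of HTTP status codes per target, and a set of noindex URLs; the duplicate threshold of two is an arbitrary, hypothetical default.

```python
# Minimal sketch: flag internal links that point at unproductive targets.
# Assumptions: `status` maps crawled URLs to HTTP codes, `noindex` is a set of
# URLs carrying a noindex directive, and `links` holds (source, target) pairs.
from collections import Counter

def unproductive_links(links, status, noindex):
    """Yield (source, target, reason) for links worth pruning or repointing."""
    for source, target in links:
        code = status.get(target)
        if code is None:
            continue  # target was not crawled, so there is nothing to judge
        if 300 <= code < 400:
            yield source, target, f"redirect ({code})"
        elif code >= 400:
            yield source, target, f"error ({code})"
        elif target in noindex:
            yield source, target, "noindex target"

def duplicate_links(links, max_per_target=2):
    """Return (source, target, count) where a page repeats one link too often."""
    counts = Counter(links)
    return sorted((s, t, n) for (s, t), n in counts.items() if n > max_per_target)
```

Redirect and error hits usually get repointed to the final URL or removed; noindex hits are worth a second look, because a page you link to prominently but keep out of the index is often a sign that one of the two decisions is wrong.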

Anchor Text in Practice

Anchor text is one of the few signals where you can be precise without manipulation. The text inside an <a> tag tells a crawler what the target page is about. It also tells the user, which is the more important purpose.

The rules that hold up across years of guidance:

- Descriptive over generic. “Read our crawl budget guide” is better than “click here”. The first carries meaning, the second wastes the link.

- Vary naturally across pages. Linking to the same destination with the exact same anchor from twenty pages looks engineered. Vary the phrasing while staying topical. “Crawl budget”, “crawl budget optimization”, “how Google decides what to crawl” all point at the same concept without sounding stamped.

- Avoid keyword stuffing. A long string of keywords as anchor reads as spam. One descriptive phrase is enough.

- Match user intent. The anchor should reflect what the reader will get if they click. Misleading anchors hurt trust and increase bounce.
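Three of these rules lend themselves to a simple lint. The sketch assumes links arrive as (source, target, anchor) triples; the generic-phrase list, the eight-word cutoff, and the twenty-page threshold (taken from the example above) are hypothetical starting points, not published limits.

```python
# Minimal sketch of anchor-text lint rules. The phrase list and both
# thresholds are illustrative assumptions; tune them for your own site.
from collections import defaultdict

GENERIC_ANCHORS = {"click here", "here", "read more", "learn more", "this page"}
MAX_ANCHOR_WORDS = 8  # hypothetical cutoff for "reads like keyword stuffing"

def normalize(anchor):
    """Lowercase and collapse whitespace so trivial variants compare equal."""
    return " ".join(anchor.lower().split())

def lint_anchor(anchor):
    """Return the rule violations found in a single anchor text."""
    text = normalize(anchor)
    problems = []
    if not text:
        problems.append("empty anchor")
    elif text in GENERIC_ANCHORS:
        problems.append("generic anchor")
    if len(text.split()) > MAX_ANCHOR_WORDS:
        problems.append("possible keyword stuffing")
    return problems

def repeated_exact_anchors(links, min_sources=20):
    """Return {(target, anchor): page count} for anchors reused verbatim on many pages."""
    sources = defaultdict(set)
    for source, target, anchor in links:
        sources[(target, normalize(anchor))].add(source)
    return {key: len(pages) for key, pages in sources.items() if len(pages) >= min_sources}
```

The fourth rule, matching user intent, does not reduce to string checks; it still needs a human reading the anchor next to the target page.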

Anchor text quality shows up in audits as a clean histogram. When you visualize all anchors pointing to a single page, a healthy site has a moderate spread of related phrasings around a clear topical center. A site with weak signals shows random terms scattered across the histogram with no concentration.
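The histogram itself is a simple count. This sketch assumes (source, target, anchor) triples from a crawl and adds one rough concentration figure, the share of links that use the single most common anchor; reading that number as "healthy" or "stamped" is a judgment call, not a standard metric.

```python
# Minimal sketch: the anchor-text histogram for one target URL.
# Assumption: `links` is a list of (source, target, anchor) triples.
from collections import Counter

def anchor_histogram(links, target):
    """Count normalized anchors pointing at `target`, most common first."""
    counts = Counter(
        " ".join(anchor.lower().split())  # lowercase, collapse whitespace
        for _source, dest, anchor in links
        if dest == target
    )
    return counts.most_common()

def top_anchor_share(histogram):
    """Share of links using the single most common anchor (0.0 if none)."""
    total = sum(n for _, n in histogram)
    return histogram[0][1] / total if total else 0.0
```

A share near 1.0 suggests one stamped anchor everywhere; a very low share with unrelated phrasings suggests the scattered pattern described above.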

Topic Clusters and Silos

A topic cluster is a network of pages organized around a single broad topic, with a pillar page at the center and several supporting articles linking in and out. The structure mirrors how AI search engines parse a site for topical authority. A site with one essay about robots.txt is treated as one essay. A site with a cluster of twelve interconnected pages on crawl budget, robots.txt, sitemaps, redirect chains, and link depth is treated as authoritative on technical SEO crawling.

The cluster pattern earns its keep on three fronts. Search engines see the topical concentration. AI engines like ChatGPT, Perplexity, and Claude lean toward sites with comprehensive coverage when picking citations. And readers who land on one page in a cluster have an obvious next step into a related page, which extends session depth and reduces drop off.

Silos are a stricter version of clusters. In a silo, internal links never cross category boundaries. The category for crawl budget links only to other crawl budget pages, never to schema or hreflang content. Silos were popular in older SEO advice, but they often work against the way modern readers and AI crawlers move. A site that is both organized and bridged across topics tends to perform better than a site that is rigidly siloed.

The practical takeaway: cluster, do not isolate. Build clusters around your core topics. Add cross cluster links where the relationship is real. Avoid forcing cross links that do not serve a reader.
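One way to see where a site sits between silo and bridged clusters is the share of links that cross categories. The sketch assumes, hypothetically, that the first URL path segment names the category, which will not hold on every site; links from or to the homepage are ignored because it has no category under that rule.

```python
# Minimal sketch: how siloed is the link graph? Assumption: the first path
# segment of a URL is its category (replace category_of with your own mapping).
from urllib.parse import urlsplit

def category_of(url):
    """Hypothetical rule: first non-empty path segment, or '' for the homepage."""
    segments = [s for s in urlsplit(url).path.split("/") if s]
    return segments[0] if segments else ""

def cross_category_share(links):
    """Fraction of (source, target) links between pages in different categories."""
    pairs = [(category_of(s), category_of(t)) for s, t in links]
    pairs = [(a, b) for a, b in pairs if a and b]  # skip homepage links
    if not pairs:
        return 0.0
    return sum(a != b for a, b in pairs) / len(pairs)
```

A share of zero means a strict silo. There is no universal target value; the point is to notice when related categories never connect at all.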

Orphan Pages

An orphan page is a page on your site with no internal links pointing to it. Unless other sites link to it, a crawler can only discover an orphan page through the sitemap, and even then the page is treated as low priority. Orphans accumulate quietly, especially on sites with frequent editorial activity. A blog post removed from the navigation menu but kept live, a landing page from an old campaign, a category that was renamed but not relinked: all of these become orphans without anyone noticing.

Orphans are bad for two reasons. The page itself loses crawl frequency, so updates take longer to reach the index. And the orphan is often a sign of a deeper issue: no other page on your site considers this content worth pointing to. If your own site does not link to it, why should a search engine treat it as important?

The fix is mechanical. Run a full crawl, compare the crawl results against the sitemap and the URL list from your CMS, and list every URL that exists but has no internal incoming links. For each orphan, decide: should it be linked from somewhere, redirected to a relevant live page, or removed entirely? A page that does not earn a link does not earn a place in the live site.
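The comparison step is a set difference. This sketch assumes you already have the sitemap URLs, a CMS URL export, and the (source, target) links from a full crawl, all normalized the same way (protocol, host, trailing slashes); mismatched normalization is the most common source of false orphans.

```python
# Minimal sketch of orphan detection: URLs known to exist (sitemap or CMS)
# that no other page links to. Assumes all URLs share one normalized form.

def find_orphans(sitemap_urls, cms_urls, links, homepage):
    """Return known URLs with no inbound internal link (homepage excluded)."""
    known = set(sitemap_urls) | set(cms_urls)
    linked = {target for source, target in links if source != target}  # ignore self-links
    return sorted(known - linked - {homepage})
```

The triage decision for each result (link it, redirect it, or remove it) stays manual.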

Link Depth

Link depth is the number of clicks from the homepage to a target page. Pages at depth one (direct from homepage) receive the most crawl attention. Pages at depth four or beyond receive far less. Pages at depth six or more are crawled rarely enough that updates can take weeks to reach the index.
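Because link depth is the shortest click path from the homepage, a breadth-first search computes it directly. The sketch assumes the crawl has been turned into an adjacency dict mapping each URL to the URLs it links to; the example URLs are hypothetical.

```python
# Minimal sketch: link depth via breadth-first search from the homepage.
# Assumption: `graph` maps each URL to an iterable of the URLs it links to.
from collections import deque

def link_depths(graph, homepage):
    """Return {url: shortest click distance from homepage} for reachable URLs."""
    depths = {homepage: 0}
    queue = deque([homepage])
    while queue:
        url = queue.popleft()
        for target in graph.get(url, ()):
            if target not in depths:  # BFS reaches each URL first by a shortest path
                depths[target] = depths[url] + 1
                queue.append(target)
    return depths
```

For example, a post reachable only through `/blog/` and then `/blog/page/2/` comes out at depth three. URLs missing from the result are not reachable through links at all, which makes them orphans from the crawler's point of view.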

Three to four clicks deep is a good upper bound for important pages on most sites. Anything deeper needs a reason. A category that is genuinely four levels in a hierarchy can sit at depth four. A blog post that is four clicks deep because pagination buried it is a problem.

The fix is direct linking. Add internal links from high-traffic pages, from contextually relevant content, or from the global navigation. The goal is not to flatten everything to depth one; that makes navigation unreadable. The goal is to make sure no important page sits behind unnecessary clicks.

A practical visual: an SEO crawler can produce a link depth distribution for your entire site. A healthy distribution has most pages clustered between depth two and depth four, a small tail at depth five and beyond, and zero pages at depth seven or more.
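If your crawler exports a depth per URL, the distribution and the pages to review take only a few lines. This sketch assumes a {url: depth} dict; the default thresholds mirror the rules of thumb above (review from depth four, alarm at seven) and are guidelines, not hard limits.

```python
# Minimal sketch: summarize a {url: depth} map into a depth distribution plus
# the URLs that need attention. Thresholds follow the rules of thumb above.
from collections import Counter

def depth_report(depths, review_from=4, alarm_from=7):
    """Return (histogram by depth, URLs to review, URLs at alarming depth)."""
    histogram = dict(sorted(Counter(depths.values()).items()))
    review = sorted(u for u, d in depths.items() if review_from <= d < alarm_from)
    alarm = sorted(u for u, d in depths.items() if d >= alarm_from)
    return histogram, review, alarm
```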

Auditing Internal Links at Scale

Manual review of internal links does not survive past a hundred pages. Auditing is a crawl problem, not a spreadsheet problem.

A desktop crawler like Seodisias walks every internal link on your site and produces three views that matter for this work.

Inbound link counts per URL. For each page, how many other pages on your site link to it. Sort ascending to surface orphans (zero inbound) and near orphans (one or two inbound). Sort descending to confirm that the pages with the most inbound links are actually the ones you want highlighted. If a thin tag page has a hundred inbound links and your conversion page has three, that is a structural problem.
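The same view can be rebuilt from a raw link export. This sketch assumes (source, target) pairs plus a list of all known URLs, counts each linking page once per target (matching "how many other pages link to it"), and ignores self-links. The near-orphan cutoff of two follows the text above.

```python
# Minimal sketch: inbound internal link counts per URL, ascending, so orphans
# and near-orphans surface first. Assumes (source, target) pairs from a crawl.
from collections import Counter

def inbound_counts(links, all_urls):
    """Return [(url, inbound count)] sorted by count, then URL."""
    unique = {(s, t) for s, t in links if s != t}  # one vote per linking page
    counts = Counter(t for _s, t in unique)
    return sorted(((u, counts[u]) for u in all_urls), key=lambda p: (p[1], p[0]))

def near_orphans(ranked, cutoff=2):
    """URLs with at least one but no more than `cutoff` inbound links."""
    return [u for u, n in ranked if 0 < n <= cutoff]
```

The homepage often shows zero inbound links in this view, which is expected; reversing the list gives the descending check for thin pages that attract too many links.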

Link depth. For each page, the shortest path from the homepage. Filter for pages at depth four or more. Decide for each one whether it deserves the depth or needs a shorter path.

Anchor text aggregation. For each target URL, the list of anchor texts that link to it. Look for descriptive consistency. Pages whose anchors are mostly “click here” or generic phrasing need attention.
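To turn "mostly generic" into a sortable number, compute the generic share of inbound anchors per target. The sketch assumes (source, target, anchor) triples; the phrase list and the 50% threshold are hypothetical defaults.

```python
# Minimal sketch: flag targets whose inbound anchors are mostly generic.
# The GENERIC set and the threshold are illustrative assumptions.
from collections import defaultdict

GENERIC = {"click here", "here", "read more", "learn more", "more", "this"}

def generic_anchor_targets(links, threshold=0.5):
    """Return {target: generic share} for targets at or above `threshold`."""
    totals = defaultdict(int)
    generic = defaultdict(int)
    for _source, target, anchor in links:
        totals[target] += 1
        if " ".join(anchor.lower().split()) in GENERIC:
            generic[target] += 1
    return {t: generic[t] / totals[t] for t in totals if generic[t] / totals[t] >= threshold}
```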

The same crawler also catches the technical noise around internal linking: broken links that need a redirect or removal, redirect chains that drain crawl budget through your own internal navigation, and pages that link to noindex destinations.
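Redirect chains are easy to trace once redirects are in a lookup table. This sketch assumes a {url: redirect location} dict built from crawl data, treats URLs without an entry as final, and reports internally linked URLs that take two or more hops or loop back on themselves.

```python
# Minimal sketch: trace redirect paths for internally linked URLs.
# Assumption: `redirects` maps each redirecting URL to its Location target.

def redirect_path(url, redirects, max_hops=10):
    """Return (path, looped) listing every URL visited starting from `url`."""
    path, seen = [url], {url}
    while url in redirects and len(path) <= max_hops:
        url = redirects[url]
        if url in seen:
            return path + [url], True  # redirect loop detected
        path.append(url)
        seen.add(url)
    return path, False

def internal_redirect_chains(link_targets, redirects):
    """Report linked URLs whose path has two or more hops, or that loop."""
    report = {}
    for target in sorted(set(link_targets)):
        path, looped = redirect_path(target, redirects)
        if looped or len(path) > 2:
            report[target] = path
    return report
```

The fix for a reported chain is usually to point the internal link straight at the last URL in the path, so the crawler spends one request instead of several.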

For sites with active editorial work, run this audit monthly. For sites in active migration or after a major restructure, run it weekly. The goal is not perfection; it is to keep drift small enough that small fixes are always possible.

Patterns That Survive Site Growth

A small site can be wired by hand. A site with five thousand pages cannot. Patterns that survive growth share three properties.

They are systematic. The placement of internal links is decided by the template, not by human judgment. A blog post template that automatically links to the parent category, the latest three posts in the same category, and the pillar page produces consistent linking without editorial overhead.
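The template rule in that example can be expressed as a pure function, which also makes it testable. The `Post` data model, the category and pillar mappings, and the URLs below are hypothetical; a real CMS exposes this data through its own APIs.

```python
# Minimal sketch of a templated link block for a blog post: parent category,
# the latest three posts in the same category, and the pillar page.
# The Post model and both URL mappings are hypothetical stand-ins for CMS data.
from dataclasses import dataclass
from datetime import date

@dataclass
class Post:
    url: str
    category: str
    published: date

def template_links(post, posts, category_urls, pillar_urls, latest_n=3):
    """Return the structural links the template would render for `post`."""
    siblings = sorted(
        (p for p in posts if p.category == post.category and p.url != post.url),
        key=lambda p: p.published,
        reverse=True,  # newest first
    )
    links = [category_urls[post.category]]
    links += [p.url for p in siblings[:latest_n]]
    pillar = pillar_urls.get(post.category)
    if pillar and pillar not in links:  # avoid a duplicate if the pillar is also recent
        links.append(pillar)
    return links
```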

They are auditable. The pattern produces a link graph that an SEO crawler can verify. If a template should add five links and the crawler finds three, the template is broken and needs investigation.

They tolerate drift. Editorial teams will eventually link in ways the pattern did not anticipate. The pattern needs to absorb those links without breaking. A pattern that depends on a fixed link count per page breaks the moment someone adds a contextual link in the body. A pattern that says “every page must link to the parent category” does not.
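The difference between an auditable, drift-tolerant rule and a brittle one shows up clearly in code. Here `expected` stands for the links a template should render and `found` for what the crawler saw on the page; both are assumptions about how you export your data. A required-links check reports exactly which template links are missing and ignores extra editorial links, while an exact-count check fails as soon as an editor adds one.

```python
# Minimal sketch contrasting two audit rules for templated links.
# `expected`: links the template should render; `found`: links the crawler saw.

def exact_count_check(expected, found):
    """Brittle rule: passes only if the page has exactly the expected link count."""
    return len(set(found)) == len(set(expected))

def required_links_check(expected, found):
    """Drift-tolerant rule: return required links missing from the page."""
    return sorted(set(expected) - set(found))
```

If a template should add five links and the crawler finds three, `required_links_check` returns the two that are missing, which points straight at the broken part of the template.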

The combination of templated structural links plus editorial contextual links is what most sites end up with. The structural part is governed by the CMS. The contextual part is governed by writers using descriptive anchors and following the cluster shape. Both parts are visible to the crawler, both contribute to crawl budget allocation, both need periodic audits.

Conclusion

Internal linking sits at the intersection of architecture, editorial practice, and crawl budget. The patterns are small individually but compound. A homepage that surfaces the pillar pages, clusters that connect supporting content, anchors that carry meaning, depth that respects priority, and an audit routine that catches drift: together, those five elements shape how every search engine and AI engine experiences your site.

For deeper coordination, pair internal link audits with checks on crawl budget, ecommerce crawl budget, and your SEO crawler routine. The internal link graph is one of the four or five technical SEO levers that matter at every site size, and one of the few that respond to deliberate work over months rather than years.

If you need a tool to run the crawl, download Seodisias for free. It works locally on your machine, has no URL limits, and surfaces inbound counts, link depth, and anchor text distributions as part of every audit.